Built from publicly available source. Clean-room implementation. Zero copied code.

Claude Code's architecture was published on GitHub. The internet went wild. While everyone was reading the source, we studied the patterns and built something better.

SynthCode is the production-grade agent framework Claude Code should have been: lightweight, model-agnostic, TypeScript-first, MIT licensed.

The best agent frameworks aren't walled gardens. They're composable, transparent, and work with any model you choose. SynthCode extracts the battle-tested agentic patterns from publicly available source and combines them with the best ideas from the open-source ecosystem.

- Zero runtime dependencies -- only peer deps on

@anthropic-ai/sdk,openai, andzod(all optional) - Works with Claude, GPT, Ollama -- swap providers with one line, or bring your own

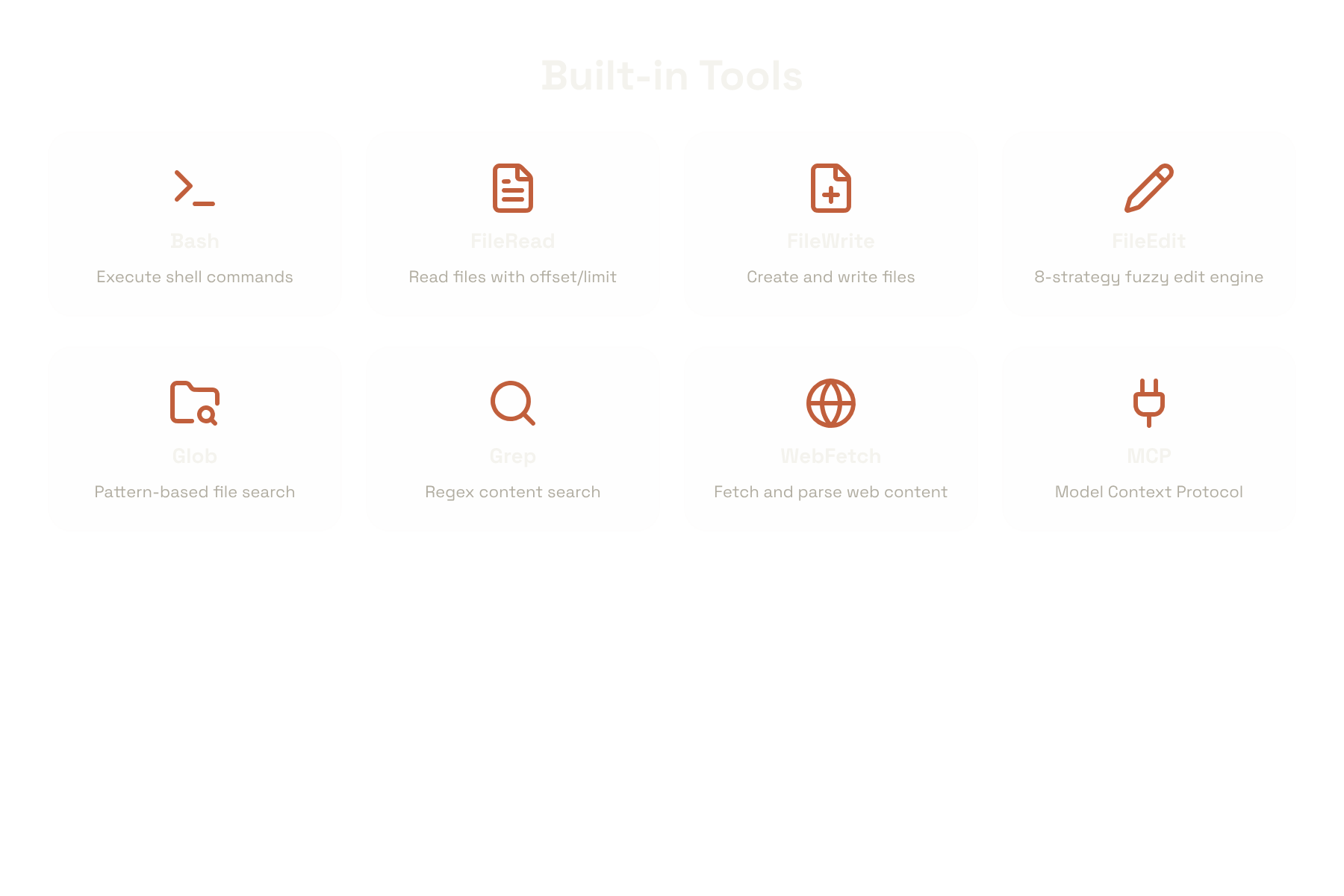

- 8 built-in tools -- Bash, FileRead, FileWrite, FileEdit (8-strategy fuzzy engine), Glob, Grep, WebFetch, MCP

- Battle-tested agent loop -- doom-loop detection, retry with backoff, token-aware compaction

- Permissions engine -- pattern-based allow/deny/ask with wildcard support

- Sub-agent delegation -- fork agents, nest them as tools, isolate permissions

- Structured output -- Zod-validated responses via

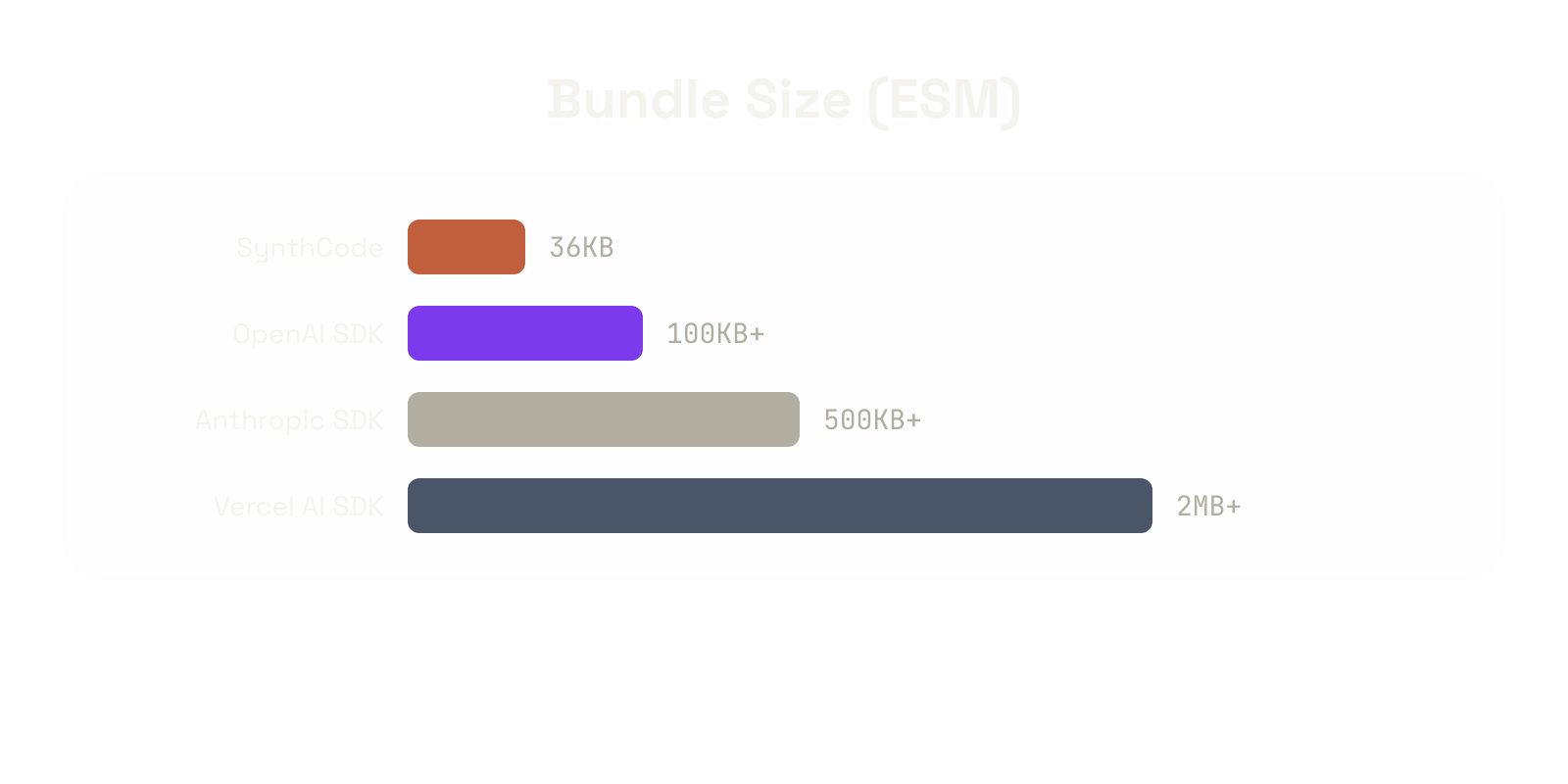

structured()orstructuredViaTool() - 34KB ESM bundle -- smaller than most single-file utilities
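To make the permissions bullet concrete, here is a minimal sketch of how pattern-based allow/deny/ask rules with wildcards could be evaluated. It is not SynthCode's `PermissionEngine` API: the `Rule` type, the `checkPermission` function, the `Tool(argument)` call format, and the first-match-wins ordering are all assumptions made for illustration.

```typescript
// Hypothetical sketch: names and rule semantics are assumptions, not SynthCode's API.
type Decision = "allow" | "deny" | "ask";

interface Rule {
  pattern: string; // e.g. "Bash(git *)", where "*" matches any run of characters
  decision: Decision;
}

// Convert a wildcard pattern into an anchored regular expression.
function patternToRegExp(pattern: string): RegExp {
  // Escape every regex metacharacter except "*", then expand "*" to ".*".
  const escaped = pattern.replace(/[.+?^${}()|[\]\\]/g, "\\$&");
  return new RegExp("^" + escaped.replace(/\*/g, ".*") + "$", "s");
}

// First matching rule wins; anything unmatched falls back to "ask".
function checkPermission(rules: Rule[], call: string): Decision {
  for (const rule of rules) {
    if (patternToRegExp(rule.pattern).test(call)) return rule.decision;
  }
  return "ask";
}

const rules: Rule[] = [
  { pattern: "Bash(rm *)", decision: "deny" },
  { pattern: "Bash(git *)", decision: "allow" },
  { pattern: "FileRead(*)", decision: "allow" },
];

console.log(checkPermission(rules, "Bash(git status)"));
```

Putting deny rules first means a broad allow rule further down the list cannot override them, which is one common way to order such rule sets.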

```bash
npx @avasis-ai/synthcode "Explain this codebase" --ollama qwen3:32b
```

Zero config. Auto-detects Ollama, Anthropic, or OpenAI from environment variables.
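As a rough idea of what environment-based detection can look like, the sketch below picks a provider from a set of environment variables. `ANTHROPIC_API_KEY` and `OPENAI_API_KEY` are the conventional variable names for those SDKs and `OLLAMA_HOST` is Ollama's host variable, but which variables SynthCode actually reads, and in what order, is an assumption here. `detectProvider` is a hypothetical name.

```typescript
// Hypothetical sketch of environment-based provider detection.
// The variables checked and their priority order are assumptions.
type ProviderName = "anthropic" | "openai" | "ollama";

function detectProvider(
  env: Record<string, string | undefined>,
): ProviderName | null {
  if (env["ANTHROPIC_API_KEY"]) return "anthropic";
  if (env["OPENAI_API_KEY"]) return "openai";
  if (env["OLLAMA_HOST"]) return "ollama";
  return null; // nothing configured; the caller decides how to report it
}

console.log(detectProvider({ OPENAI_API_KEY: "sk-example" }));
```

In a Node script you would pass `process.env` as the argument.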

import { Agent, BashTool, FileReadTool, OllamaProvider } from "@avasis-ai/synthcode";

const agent = new Agent({

model: new OllamaProvider({ model: "qwen3:32b" }),

tools: [BashTool, FileReadTool],

});

for await (const event of agent.run("List all TypeScript files in src/")) {

if (event.type === "text") process.stdout.write(event.text);

if (event.type === "tool_use") console.log(` [${event.name}]`);

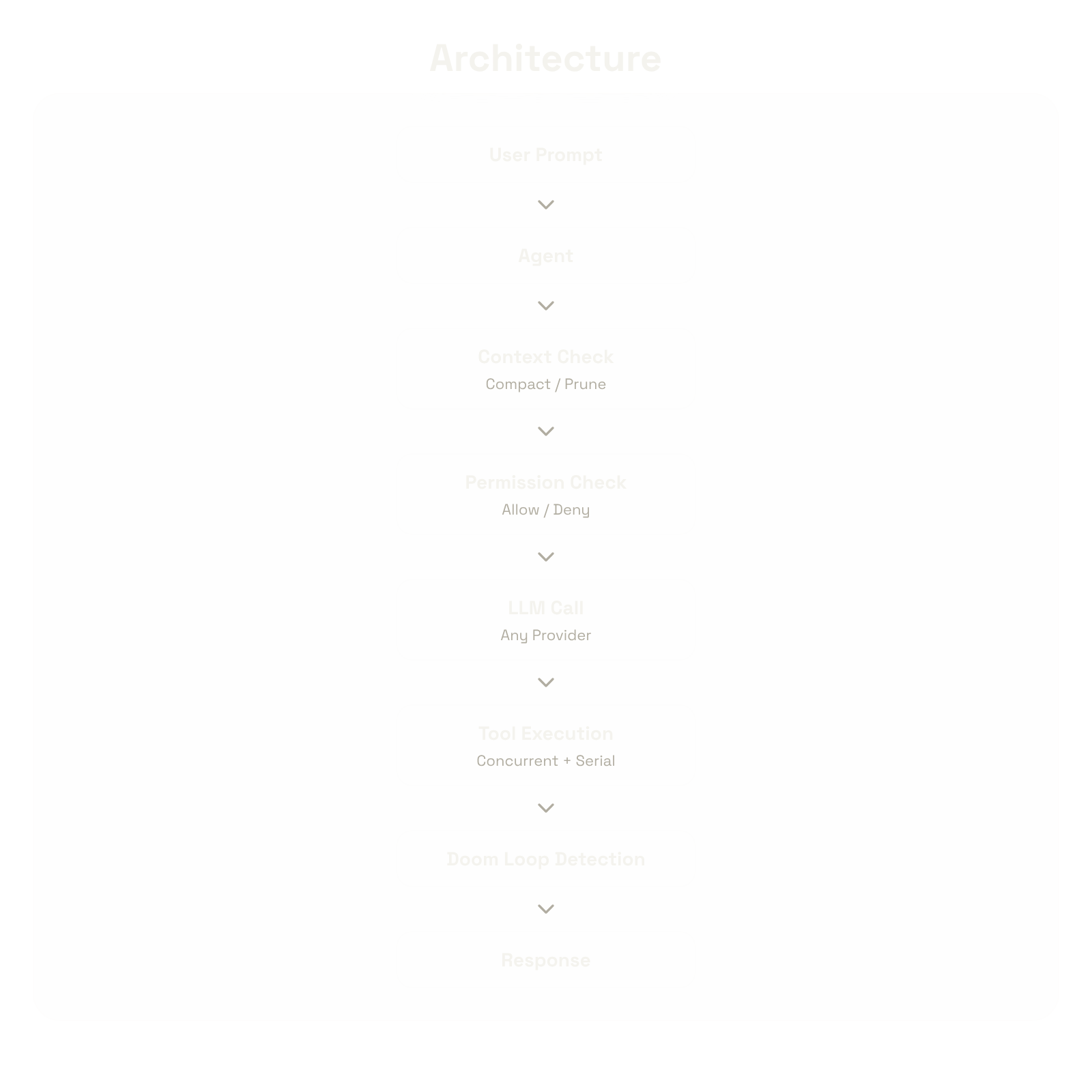

}User Prompt

|

v

[Agent] --> [Agent Loop]

|

+-- Context Check --> Compact / Prune

+-- Permission Check --> Allow / Deny / Ask

+-- LLM Call (any provider)

+-- Tool Orchestration (concurrent + serial)

+-- Doom Loop Detection

+-- Hook System (turn/tool/error/compact)

|

v

Response + Tool Results
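The doom loop detection step in the diagram is not spelled out here, so the following is only a sketch of one simple approach: flag the loop when the model issues the same tool call, with the same input, several times in a row. The `DoomLoopDetector` class, the `ToolCall` shape, and the default threshold of 3 are assumptions, not SynthCode's implementation.

```typescript
// Hypothetical sketch: flags a "doom loop" when the same tool call repeats back to back.
interface ToolCall {
  name: string;
  input: unknown;
}

class DoomLoopDetector {
  private readonly threshold: number;
  private lastKey: string | null = null;
  private repeats = 0;

  constructor(threshold = 3) {
    this.threshold = threshold;
  }

  // Returns true once the same call has been seen `threshold` times in a row.
  record(call: ToolCall): boolean {
    const key = `${call.name}:${JSON.stringify(call.input)}`;
    this.repeats = key === this.lastKey ? this.repeats + 1 : 1;
    this.lastKey = key;
    return this.repeats >= this.threshold;
  }
}

const detector = new DoomLoopDetector();
const call: ToolCall = { name: "Bash", input: { command: "ls" } };
detector.record(call);
detector.record(call);
console.log(detector.record(call)); // third identical call in a row
```

An agent loop could respond to a `true` result by stopping the run or by telling the model that it is repeating itself.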

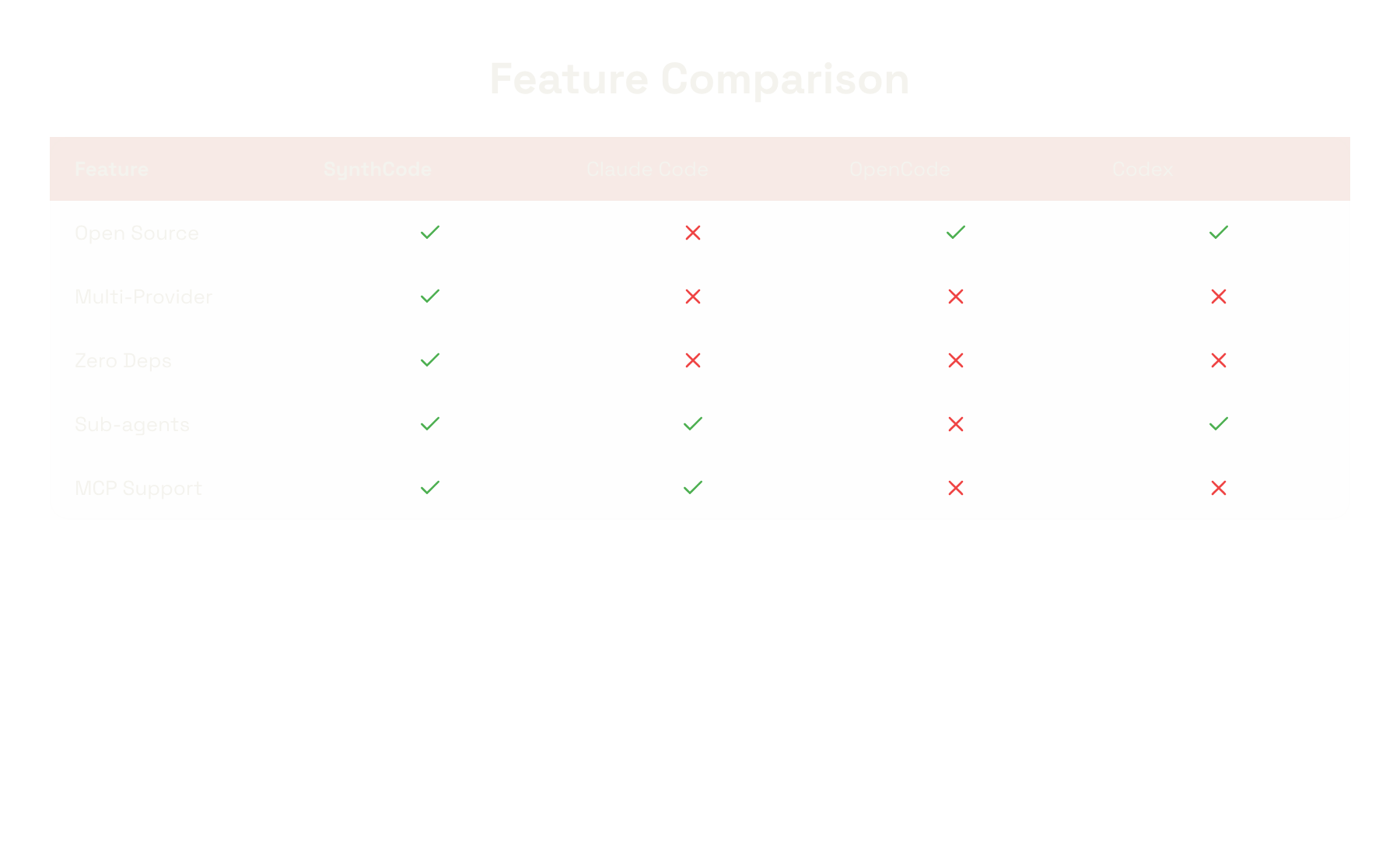

| Feature | SynthCode | Claude Code | OpenCode | Codex |
|---|---|---|---|---|
| Open Source | MIT | Proprietary | MIT | MIT |
| Multi-Provider | Claude, GPT, Ollama, Custom | Claude only | Claude only | Claude only |
| Built-in Tools | 8 | 12 | 5 | 6 |
| Fuzzy Edit Engine | 8 strategies | Unknown | 1 strategy | Unknown |
| Context Compaction | Token-aware | Yes | Basic | Yes |
| Doom Loop Detection | Yes | Unknown | No | Unknown |
| Sub-agent Delegation | Yes with isolation | Yes | No | Yes |
| MCP Support | Yes | Yes | No | No |
| Zero Runtime Deps | Yes | No | No | No |
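For readers wondering what "token-aware" compaction in the table might involve, here is a deliberately simple sketch: estimate the token count of the history and drop the oldest non-system messages until it fits a budget. The four-characters-per-token heuristic, the `Message` shape, and the `compact` function are assumptions. A real implementation may well summarize old turns instead of discarding them.

```typescript
// Hypothetical sketch of token-aware compaction; not SynthCode's ContextManager.
interface Message {
  role: "system" | "user" | "assistant";
  content: string;
}

// Rough heuristic (an assumption): about 4 characters per token.
const estimateTokens = (text: string): number => Math.ceil(text.length / 4);

function compact(messages: Message[], maxTokens: number): Message[] {
  const kept = [...messages]; // never mutate the caller's history
  let total = kept.reduce((sum, m) => sum + estimateTokens(m.content), 0);

  // Drop the oldest non-system message until the history fits the budget.
  while (total > maxTokens) {
    const index = kept.findIndex((m) => m.role !== "system");
    const removed = kept[index];
    if (index === -1 || removed === undefined) break; // only system messages left
    kept.splice(index, 1);
    total -= estimateTokens(removed.content);
  }
  return kept;
}

const history: Message[] = [
  { role: "system", content: "You are a helpful agent." },
  { role: "user", content: "x".repeat(400) },
  { role: "assistant", content: "y".repeat(400) },
  { role: "user", content: "What changed?" },
];
console.log(compact(history, 120).map((m) => m.role));
```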

When an LLM's edit doesn't match exactly, SynthCode tries 8 fuzzy matching strategies before giving up:

- Exact match

- Line-trimmed (ignore leading/trailing whitespace per line)

- Block anchor (match first/last line, fuzzy-match middle)

- Whitespace-normalized (collapse all whitespace to single spaces)

- Indentation-flexible (strip common indentation)

- Escape-normalized (`\n` -> newline, `\"` -> quote)

- Trimmed boundary (trim the search string)

- Context-aware (first/last line anchors with proportional middle matching)
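The cascade idea can be sketched with the first few strategies from the list. This is an illustration only, not the FileEdit tool's code: the `Strategy` type and the `findMatch` and `applyEdit` helpers are hypothetical, and the whitespace-normalized variant is simplified to compare blocks with the same number of lines as the search text.

```typescript
// Hypothetical sketch of a strategy cascade; not SynthCode's FileEdit implementation.
// Each strategy returns the exact text found in `content`, or null if it finds nothing.
type Strategy = (content: string, search: string) => string | null;

// Exact: the search text appears verbatim.
const exact: Strategy = (content, search) =>
  content.includes(search) ? search : null;

// Line-trimmed: compare line by line, ignoring leading/trailing whitespace.
const lineTrimmed: Strategy = (content, search) => {
  const lines = content.split("\n");
  const wanted = search.split("\n").map((line) => line.trim());
  for (let i = 0; i + wanted.length <= lines.length; i++) {
    const block = lines.slice(i, i + wanted.length);
    if (block.every((line, j) => line.trim() === wanted[j])) return block.join("\n");
  }
  return null;
};

// Whitespace-normalized: collapse every run of whitespace to a single space.
const normalize = (text: string): string => text.replace(/\s+/g, " ").trim();
const whitespaceNormalized: Strategy = (content, search) => {
  const lines = content.split("\n");
  const count = search.split("\n").length;
  for (let i = 0; i + count <= lines.length; i++) {
    const block = lines.slice(i, i + count).join("\n");
    if (normalize(block) === normalize(search)) return block;
  }
  return null;
};

// Try each strategy in order and stop at the first one that finds a match.
function findMatch(content: string, search: string): string | null {
  for (const strategy of [exact, lineTrimmed, whitespaceNormalized]) {
    const found = strategy(content, search);
    if (found !== null) return found;
  }
  return null;
}

function applyEdit(content: string, search: string, replacement: string): string {
  const target = findMatch(content, search);
  if (target === null) throw new Error("No match found for edit");
  // A replacer function avoids special handling of "$" in the replacement text.
  return content.replace(target, () => replacement);
}

const source = "function f() {\n    return 1;\n}\n";
console.log(applyEdit(source, "\treturn 1;", "    return 2;"));
```

Ordering the strategies from strictest to loosest keeps the fuzzier ones from firing when an exact match is available.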

// Claude

import { AnthropicProvider } from "@avasis-ai/synthcode/llm";

new AnthropicProvider({ apiKey: "...", model: "claude-sonnet-4-20250514" });

// GPT

import { OpenAIProvider } from "@avasis-ai/synthcode/llm";

new OpenAIProvider({ apiKey: "...", model: "gpt-4o" });

// Ollama (local, free)

import { OllamaProvider } from "@avasis-ai/synthcode/llm";

new OllamaProvider({ model: "qwen3:32b" });

// Custom

import { createProvider } from "@avasis-ai/synthcode/llm";

createProvider({ provider: "custom", model: "my-model", chat: async (req) => ({ content: [], usage: { inputTokens: 0, outputTokens: 0 }, stopReason: "end_turn" }) });const result = await agent.structured<{ files: string[]; totalLines: number }>(

"Analyze this project structure",

z.object({ files: z.array(z.string()), totalLines: z.number() }),

);const researcher = new Agent({

model: provider,

tools: [GlobTool, GrepTool, FileReadTool],

systemPrompt: "You are a code researcher. Find and analyze code patterns.",

});

const tool = await researcher.asTool({ name: "research", description: "Research the codebase" });

agent.addTool(tool);const agent = new Agent({

model: provider,

tools: [BashTool],

hooks: {

onToolUse: async (name, input) => {

console.log(`Tool called: ${name}`);

return { allow: true, input };

},

onError: async (error, turn) => {

console.error(`Error on turn ${turn}: ${error.message}`);

return { retry: error.message.includes("429"), message: "Retrying..." };

},

},

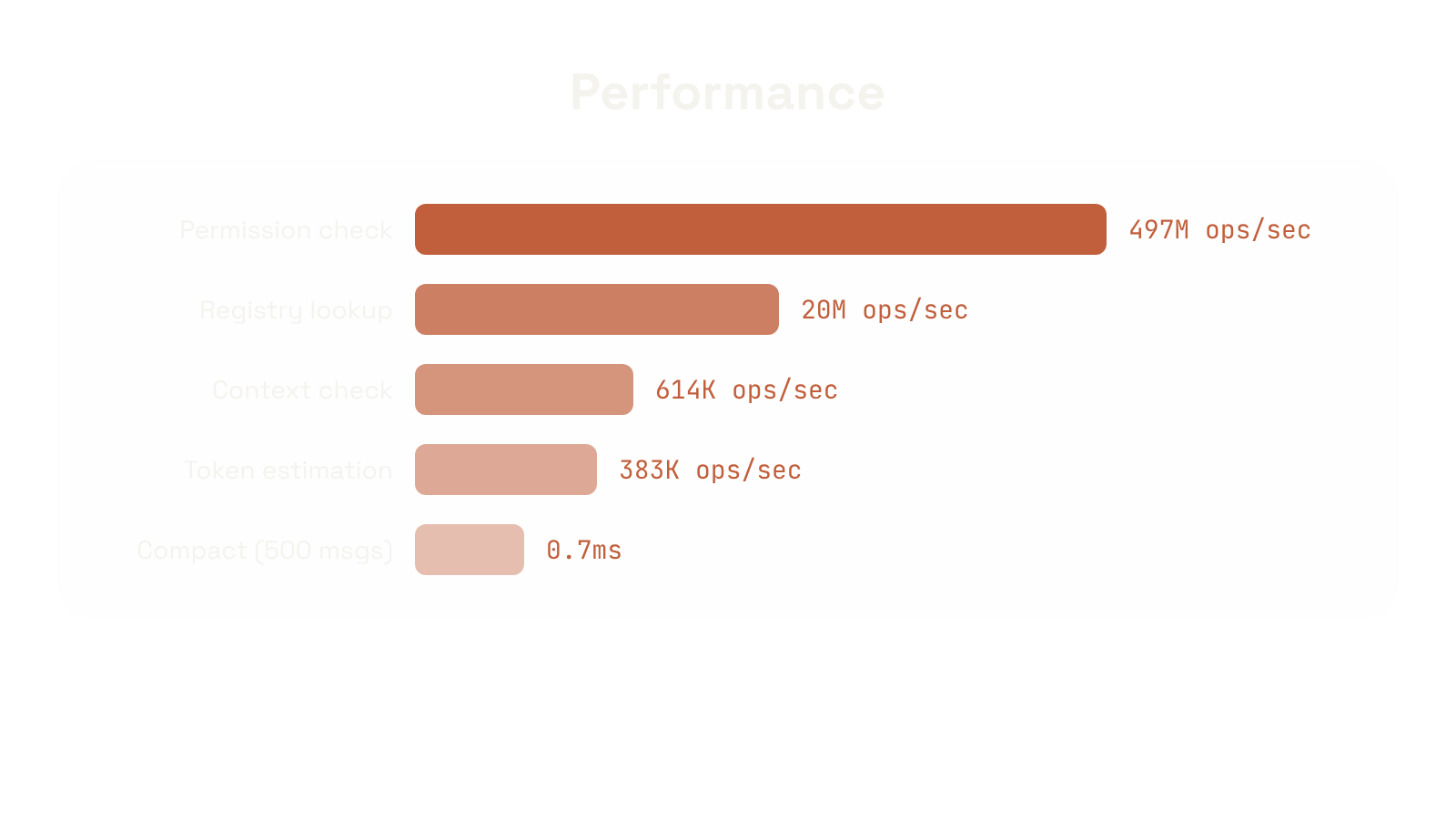

} as any);Measured on Apple M4 Pro, Node 22. These are real numbers from npx @avasis-ai/synthcode test:benchmark.

| Metric | Result |
|---|---|
| Token estimation (1KB) | 383K ops/sec |
| Context check (100 msgs) | 614K ops/sec |
| Tool registry lookup (10K) | 20M ops/sec |
| Permission check (10K) | 497M ops/sec |
| Compact (500 msgs) | 0.7 ms |
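If you want to sanity-check ops/sec figures like these on your own machine, a micro-benchmark can be as small as the sketch below. It is not the project's `test:benchmark` script: `measureOpsPerSec` is a hypothetical helper, and the `Map` lookup only stands in for something like a tool registry lookup.

```typescript
// Hypothetical micro-benchmark helper; not the project's test:benchmark script.
function measureOpsPerSec(fn: () => void, iterations = 100_000): number {
  // Warm up so JIT compilation does not dominate the timed run.
  for (let i = 0; i < 1_000; i++) fn();

  const start = performance.now();
  for (let i = 0; i < iterations; i++) fn();
  // Guard against a zero reading on very coarse timers.
  const elapsedMs = Math.max(performance.now() - start, 1e-6);

  return (iterations / elapsedMs) * 1_000;
}

// Example: time a Map lookup, loosely analogous to a tool registry lookup.
const registry = new Map<string, number>([
  ["Bash", 1],
  ["Grep", 2],
]);
const opsPerSec = measureOpsPerSec(() => {
  registry.get("Grep");
});
console.log(`${Math.round(opsPerSec).toLocaleString()} ops/sec`);
```

Numbers from a loop like this vary between runs and machines, so treat them as orders of magnitude rather than exact values.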

| Framework | ESM | Gzipped |
|---|---|---|
| SynthCode | 34KB | 8.7KB |
| OpenAI SDK | 100KB+ | 25KB+ |
| Anthropic SDK | 500KB+ | 120KB+ |
| Vercel AI SDK | 2MB+ | 400KB+ |

70 concurrent agents on a single machine. Zero failures.

| Test | Agents | Model | Result |
|---|---|---|---|
| Basic tasks + math | 50 | qwen3.5:35b | 50/50 pass |
| Mixed operations | 20 | qwen3-coder-next:latest (79B) | 20/20 pass |
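A concurrency test of this shape can be approximated with a small harness that starts every task at once and counts how many succeed. The sketch below uses no SynthCode API at all: `runConcurrently` and `Summary` are hypothetical names, and each task is a stand-in for one agent run.

```typescript
// Hypothetical harness: each task stands in for one agent run; no SynthCode API is used.
interface Summary {
  passed: number;
  failed: number;
}

async function runConcurrently(
  tasks: Array<() => Promise<unknown>>,
): Promise<Summary> {
  // allSettled waits for every task, so one failure does not abort the rest.
  const results = await Promise.allSettled(tasks.map((task) => task()));
  const passed = results.filter((r) => r.status === "fulfilled").length;
  return { passed, failed: results.length - passed };
}

// Example with 50 trivial tasks in place of real agent runs.
const demoTasks = Array.from({ length: 50 }, (_, i) => async () => i * 2);
runConcurrently(demoTasks).then(({ passed, failed }) => {
  console.log(`${passed}/${passed + failed} pass`);
});
```

To exercise real agents, each task would create an agent and consume its `agent.run(...)` stream, throwing if the output fails whatever check you care about.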

```bash
# Auto-detect provider from env vars
npx @avasis-ai/synthcode "What files are in this project?"

# Use Ollama (local, free)
npx @avasis-ai/synthcode "Refactor this function" --ollama qwen3:32b

# Use Anthropic
npx @avasis-ai/synthcode "Fix the bug" --anthropic claude-sonnet-4-20250514

# Use OpenAI
npx @avasis-ai/synthcode "Write tests" --openai gpt-4o

# Options
npx @avasis-ai/synthcode "prompt" --max-turns 20 --system "You are an expert" --json
```

```bash
npx @avasis-ai/synthcode init my-agent
cd my-agent
npm start "Hello world"
```

Creates a ready-to-run agent project with TypeScript, Vitest, and all 8 tools wired up.

```bash
npm install @avasis-ai/synthcode zod
```

Peer dependencies (install what you need):

```bash
npm install @anthropic-ai/sdk   # for Claude
npm install openai              # for GPT
# Ollama: no extra package needed
```

```typescript
import {
  Agent, BashTool, FileReadTool, FileWriteTool, FileEditTool,
  GlobTool, GrepTool, WebFetchTool,
  AnthropicProvider, OpenAIProvider, OllamaProvider,
  ContextManager, PermissionEngine, CostTracker,
  MCPClient, HookRunner,
  InMemoryStore, SQLiteStore,
} from "@avasis-ai/synthcode";
```

PRs welcome. This is MIT licensed. Build whatever you want.

MIT