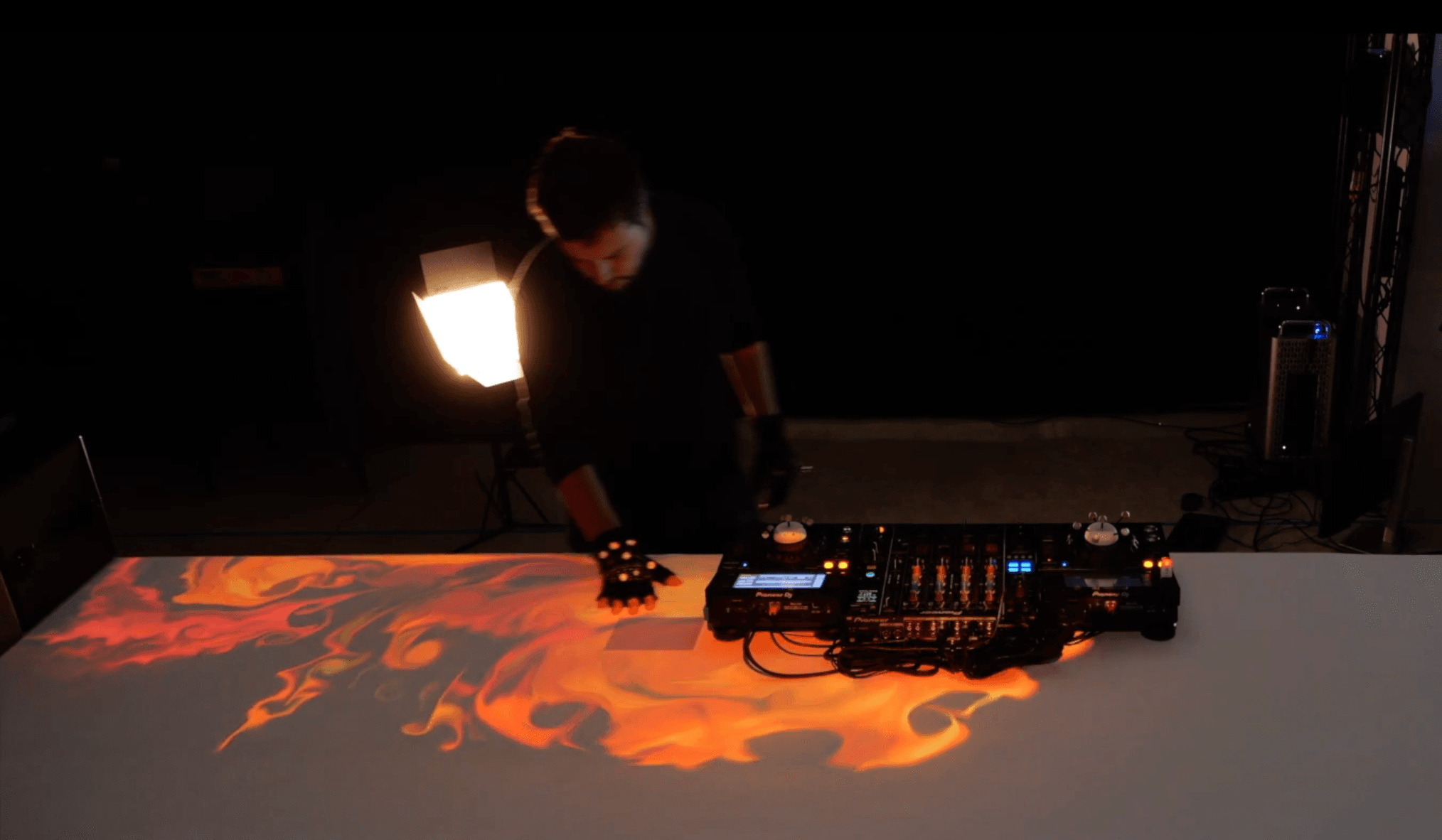

Welcome to DJESTHESIA! DJESTHESIA is an innovative interface for DJs that explores the integration of Tangible User Interfaces (TUIs). With DJESTHESIA, we aim to transform the DJ into both a performer and a performance.

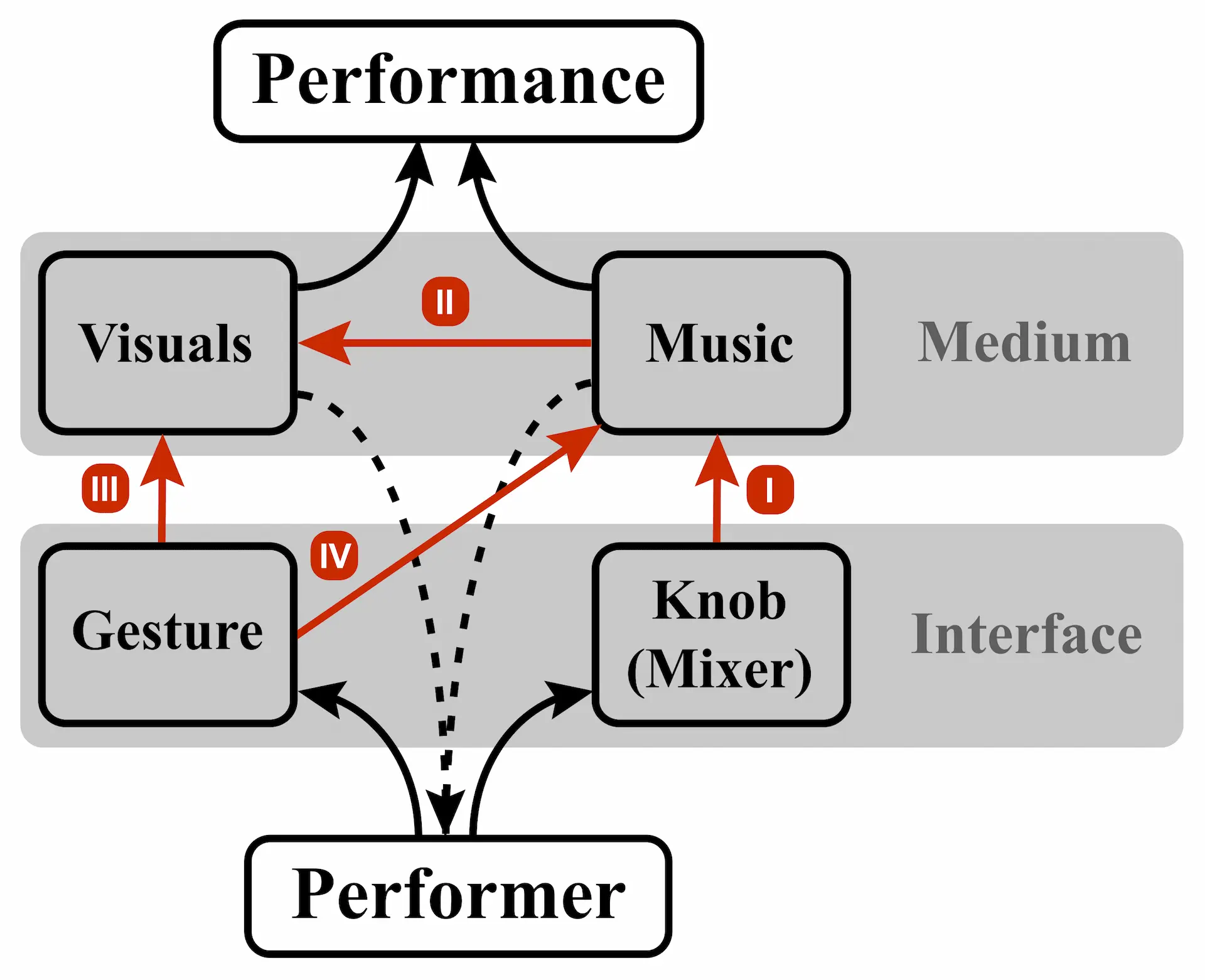

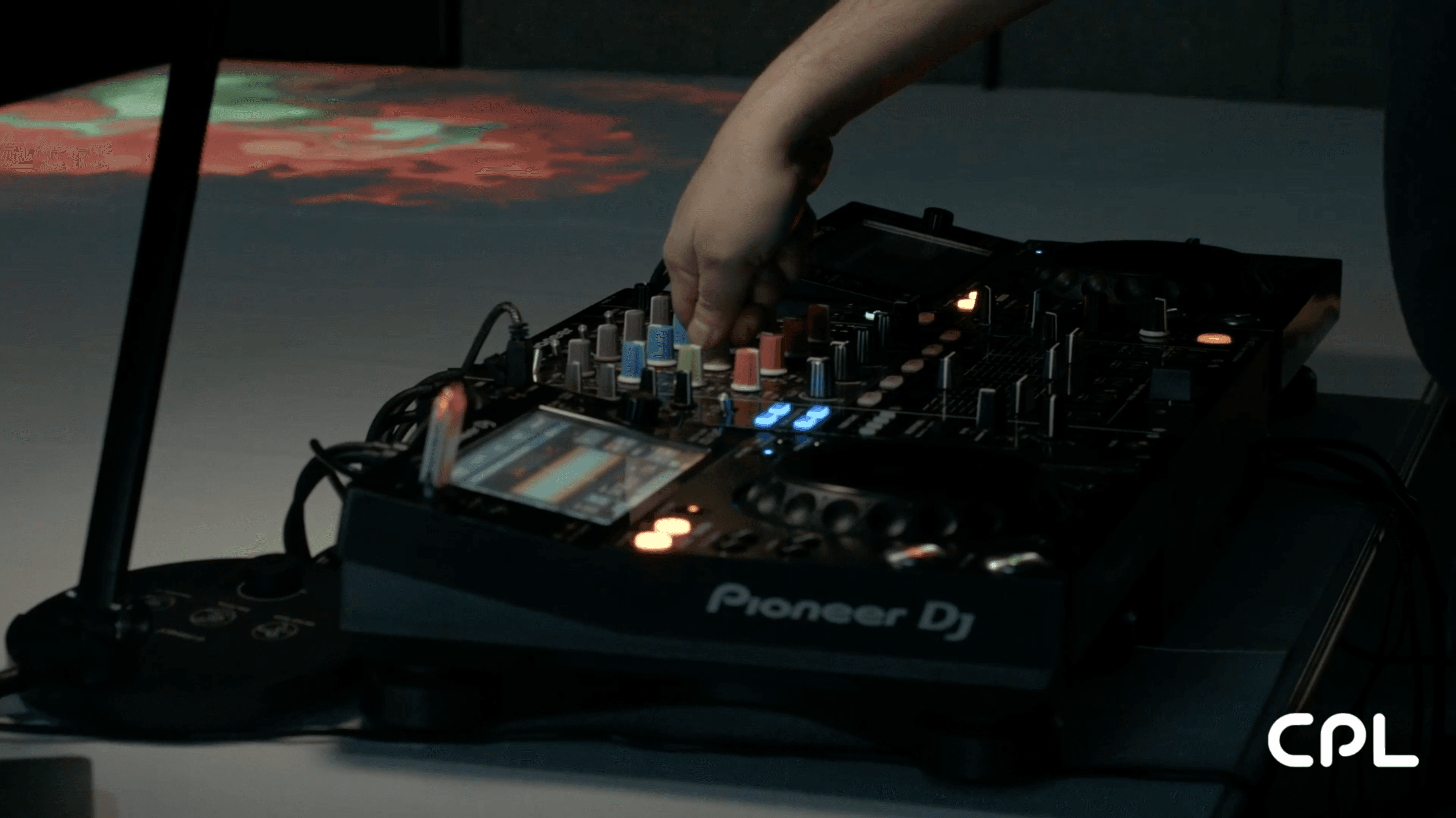

- Knob changes the music

The DJ changes the music by physically turning knobs. - Music changes visuals

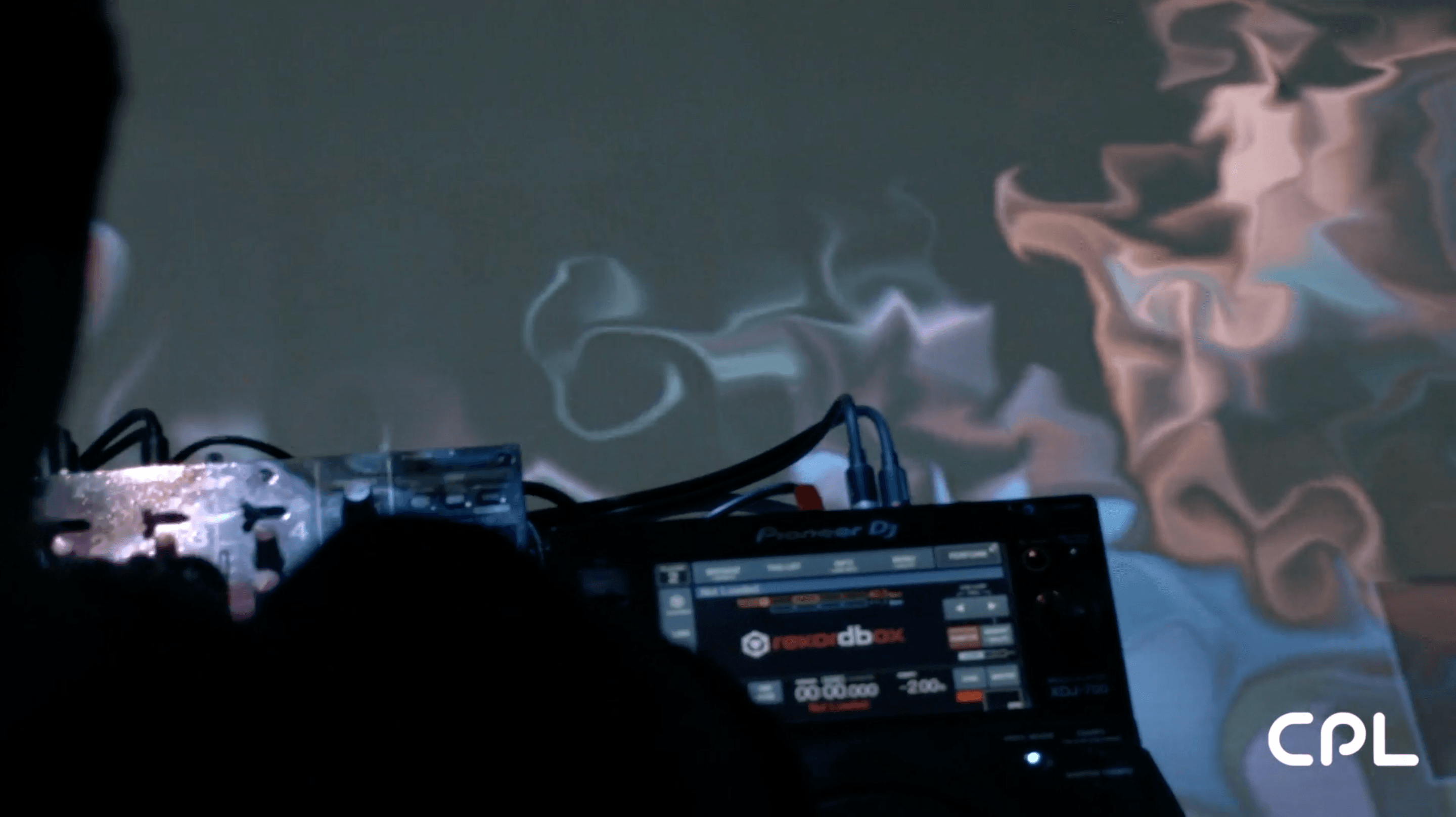

The visuals projected onto the DJ's workspace will change in response to low-latency audio. - Gesture changes visuals

The Motion Capture (MoCap) system tracks gestures of hands with MoCap marker gloves that interact with the generated visuals. - Gesture changes music

With two-handed gestures, a DJ can draw EQ curves to be applied to the music.

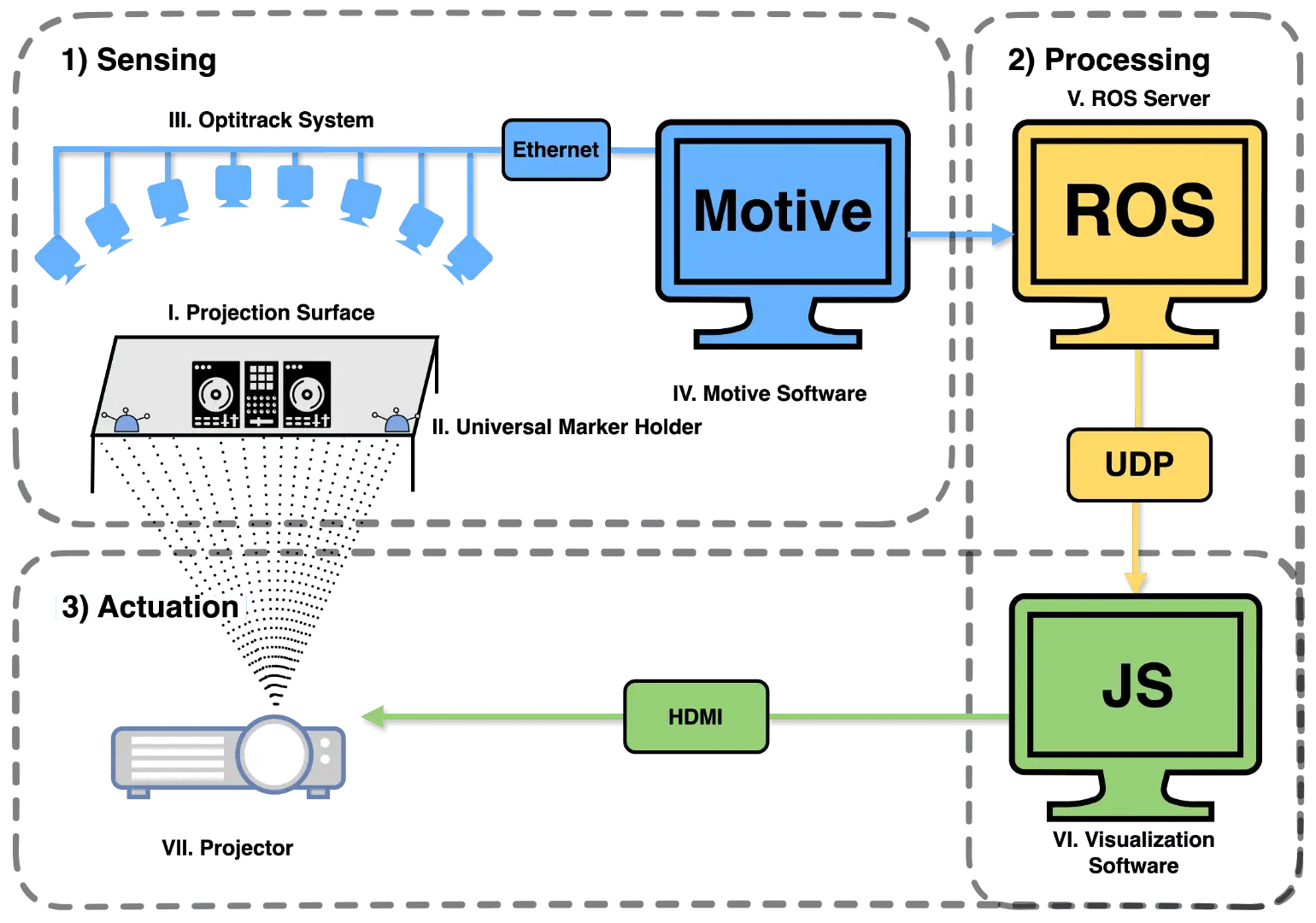

- Sensing: The system uses a motion capture camera system (OptiTrack) to track the position and orientation of the Universal Marker Holders (UMHs). UMHs are normally placed inside a glove to track the DJ's hands and gestures. However, they can also be mounted on top of the mixer or the decks to capture the interactions between the DJ and the equipment. This information is sent to the tracking software (Motive 3D) for processing.

- Processing: A server running the Robot Operating System (ROS) serves as an intermediary between the physical and digital information by processing the motion capture data and sending it to the visualization engine software via UDP.

- Actuation: The visualization engine is a WebGL fluid simulation written in JavaScript (JS). It receives the position and orientation coordinates of the UMHs and maps them to mouse pointer positions. The pointer then receives a trigger signal from the audio analyzer: if the signal exceeds a threshold, a mouse click is triggered; otherwise, the pointer simply follows the UMH coordinates. Finally, the resulting visuals are projected onto the table where the DJ is performing.
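The Processing and Actuation steps above can be illustrated with a minimal sketch. It is written in Python for brevity (the actual visualization engine is JavaScript) and is not the project's code. The JSON payload layout, the function names (`encode_pose`, `to_pointer`, `pointer_event`, `send_pose`), the table bounds, the screen size, and the threshold value are all assumptions made for illustration.

```python
import json
import socket

# NOTE: every name, the JSON layout, and every numeric value in this sketch
# is an illustrative assumption, not taken from the DJESTHESIA code base.


def encode_pose(marker_id, x, y, z):
    """Pack one UMH position into a UDP payload (hypothetical JSON layout)."""
    return json.dumps({"id": marker_id, "pos": [x, y, z]}).encode("utf-8")


def send_pose(sock, address, marker_id, x, y, z):
    """Processing step: forward one pose to the visualization engine over UDP."""
    sock.sendto(encode_pose(marker_id, x, y, z), address)


def to_pointer(x, y, table=(-0.5, 0.5, -0.3, 0.3), screen=(1920, 1080)):
    """Map table-plane coordinates (assumed metres) to clamped pixel coordinates."""
    x_min, x_max, y_min, y_max = table
    width, height = screen
    # Normalise to [0, 1] and clamp so a hand outside the table stays on screen.
    u = min(max((x - x_min) / (x_max - x_min), 0.0), 1.0)
    v = min(max((y - y_min) / (y_max - y_min), 0.0), 1.0)
    return round(u * (width - 1)), round(v * (height - 1))


def pointer_event(payload, audio_level, threshold=0.6):
    """Actuation step: click if the audio level exceeds the threshold, else move."""
    pose = json.loads(payload.decode("utf-8"))
    x, y, _z = pose["pos"]
    px, py = to_pointer(x, y)
    kind = "click" if audio_level > threshold else "move"
    return {"type": kind, "x": px, "y": py}


if __name__ == "__main__":
    # Loopback demo: one socket plays the server, the other the engine.
    receiver = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    receiver.bind(("127.0.0.1", 0))  # let the OS pick a free port
    sender = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    send_pose(sender, receiver.getsockname(), "left_hand", 0.1, -0.2, 0.05)
    payload, _ = receiver.recvfrom(1024)
    print(pointer_event(payload, audio_level=0.8))
    sender.close()
    receiver.close()
```

Keeping the coordinate mapping and the click decision as pure functions, separate from the socket code, makes it possible to check the behavior without a running MoCap system or network.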


Watch the YouTube video about DJESTHESIA!

| Component | Description | Code |
|---|---|---|
| OptiTrack | Tracking system for the tangible objects | OptiTrack |

The paper for this project can be found here.

For any questions or inquiries, please contact us at eduardo.castello@ie.edu or sono.ieu2023@student.ie.edu.